This document gives a design for a PWM solar charge controller suitable for use with 12V lead-acid batteries. It is able to monitor battery voltage, charge current and load current, and manage the charge process appropriately. The current capacity of the charger depends upon the choice of MOSFETs and cooling arrangements, but even without heatsinks it can handle ten amps. The controller uses only low-cost components, including either an ATMega328 or Arduino as a microcontroller, and is suitable for either hobbyist building or mass-production. A controller of this type is suitable for a small solar power installation of three hundred watts or less as might be found on an outbuilding, boat, RV, or a very small off-grid home.

This project is based in part on one I designed and built previously, a PWM charge controller utilising an Arduino and an INA219 voltage-current sensor. The performance of that design was comparable to any commercial PWM controller of similar price, but it had some drawbacks I decided needed addressing. The INA219 chip made it impossible to monitor load and charging currents separately without a second chip, which prevented the inclusion of an over-current load disconnect, and the opto-isolator MOSFET drive used more current than ideal and ran the MOSFET dangerously close to its maximum Vgs. The prototype also failed during a thunderstorm, with the INA219 starting to read only zero or full-scale voltage, which raised concerns about susceptibility to atmospheric electricity. This design is an attempt to correct these flaws.

Though low cost to construct, this design is a capable charge controller for small-scale 12V installations of up to 200W, as are often seen on outbuildings and mobile homes. It supports all of the essential features except the blocking diode - for reasons explained below, I prefer to keep this as a separate unit installed inline with the panels.

For ease of understanding and manufacture, this design can be split into a number of sections which can each be assembled and tested independently before being connected fully. As it is designed to be hobbyist-friendly, it can be assembled entirely using through-hole components on stripboard - there is no need for PCB fabrication or high-skill SMD soldering. All power MOSFETs are N-channel type, which allows for lower Ron and so reduced energy loss and increased current handling ability.

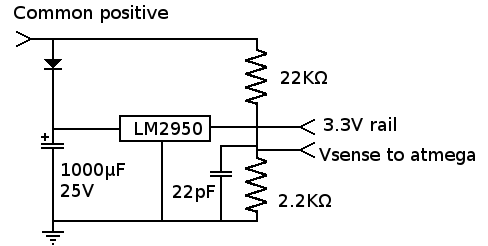

The control section is designed to use either an Arduino Pro Mini (8 or 16MHz) or a bare ATmega328 with the internal RC oscillator - the latter is better suited if you intend to mass-produce these, the former for development and hobbyists. It includes not just the processor, but also the 3.3V regulator and the voltage divider used for measuring battery voltage. Because the 3.3V rail also serves as the ADC reference, this regulator must be highly precise in order to monitor battery voltage accurately, and not all regulators can do this. The 'official' Arduino Pro Mini 3.3V uses a MIC5205 regulator, which is capable of sufficient accuracy at a maximum current of 100mA, but it is still unsuitable because its maximum input voltage is only 16V. A suitable alternative is the LP2950-3.3: 3.3V output, high precision, 30V maximum input. The current consumed by this charge controller is very small, so a switching regulator would offer no significant advantage.

The 1000μF capacitor on the regulator input is not just for smoothing out noise: It stores enough power to run the electronics for 300ms at 40mA draw, which is enough for a display. This is part of the over-current cutoff feature, preventing the microcontroller from resetting during a brief loss of power. 300ms is more than long enough to detect the over-current condition and shut off the load.
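The hold-up time of the reservoir capacitor can be estimated with t = C·ΔV/I, where ΔV is how far the capacitor can sag before the regulator drops out. The sketch below is a rough estimate that assumes a constant 40mA draw. The starting rail voltage and the minimum regulator input (taken here as 3.8V, allowing for dropout) are assumptions, not measured values. Working backwards, 300ms at 40mA from 1000μF corresponds to a usable sag of about 12V. From an assumed 13V rail the estimate comes out nearer 230ms, which is still ample for detecting an over-current and switching off the load.

```cpp
#include <cassert>

// Rough hold-up estimate for a reservoir capacitor feeding a linear
// regulator at constant current: t = C * (V_start - V_min) / I.
// The voltages passed in are assumptions chosen by the caller.
double holdupSeconds(double capacitanceF, double loadCurrentA,
                     double startVoltageV, double minInputVoltageV) {
    assert(capacitanceF > 0.0 && loadCurrentA > 0.0);
    double usableDrop = startVoltageV - minInputVoltageV;
    if (usableDrop <= 0.0) {
        return 0.0;  // already below the regulator's minimum input
    }
    return capacitanceF * usableDrop / loadCurrentA;
}
```

For example, `holdupSeconds(1000e-6, 0.040, 13.0, 3.8)` gives roughly 0.23s.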

The control board has three analog inputs (input current, output current and compensation) and two digital outputs (input switch and output switch) that are core to the function of the charge controller, plus whatever inputs and outputs the user interface requires (a minimum of one button, but you are free to add whatever interface components you wish, subject to power constraints). The input and output switching boards are both designed to accept simple 3.3V logic, so the control board is really nothing more than a microcontroller and the most basic power supply.

At a minimum, this controller needs a single button as a user interface (to restore power after an over-current shutdown). Adding an OLED or LCD display to show battery voltage and currents is strongly advised, but not strictly required. If you want, you can go over the top with analog meters and an elaborate steampunk-styled carved wooden case - but don't draw more than 100mA from the regulated 3.3V rail, or the LP2950 will not guarantee properly regulated output. The firmware I have written assumes an I2C-interfaced 128x64 OLED display.

The divider should have a ratio of 1/8 or lower. With the 3.3V ADC reference, a 1/8 ratio corresponds to a maximum measurable voltage of about 26V - the voltage which may be encountered under a fault condition, if the battery is disconnected while the panels are in optimal lighting conditions. The ratio does not need to be exact - there is no need for 0.1% resistors, because the exact ratio can be measured as part of the initial calibration process and configured into the firmware. I used 2.2kΩ and 22kΩ resistors, which give a ratio of 1/11 and a full-scale reading of about 36V.
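Converting the divided ADC reading back to battery volts is a short calculation. The sketch below assumes a 10-bit ADC with the 3.3V rail as reference (the ATmega328 datasheet defines the result as Vin·1024/Vref) and the 2.2kΩ/22kΩ divider above. `calibrationScale` is a hypothetical trim factor standing in for the ratio measured during calibration; it is not a name from the original firmware.

```cpp
#include <cassert>
#include <cstdint>

constexpr double kAdcRefV = 3.3;        // the regulated rail doubles as ADC reference
constexpr int kAdcSteps = 1024;         // 10-bit ADC: counts = Vin * 1024 / Vref
constexpr double kRTopOhm = 22000.0;    // from battery + to ADC pin
constexpr double kRBottomOhm = 2200.0;  // from ADC pin to ground

double dividerRatio() {
    return kRBottomOhm / (kRTopOhm + kRBottomOhm);  // about 1/11
}

// Highest battery voltage that still fits within the ADC range.
double fullScaleVolts() {
    return kAdcRefV / dividerRatio();
}

// Battery voltage from a raw reading. calibrationScale (hypothetical)
// corrects for resistor tolerance; 1.0 means nominal resistor values.
double batteryVolts(uint16_t adcCounts, double calibrationScale = 1.0) {
    assert(adcCounts < kAdcSteps);
    double pinVolts = adcCounts * kAdcRefV / kAdcSteps;
    return pinVolts / dividerRatio() * calibrationScale;
}
```

A reading of 355 counts corresponds to roughly 12.6V at the battery.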

If you are using an arduino, there is very little to this board: Regulator, divider, capacitors, diode, that's it. Connect to the following pins:

| Signal | Arduino pin |
|---|---|
| Via | A0 |
| Voa | A1 |
| Vcomp | A2 |
| Vbatt | A3 (from divider) |
| Display SDA | A4 (optional) |
| Display SCL | A5 (optional) |
| Control button | D4 (uses internal pull-up) |
| Output enable | D7 |
| Charge enable | D3 (or D9 for lower-frequency operation; untested) |
| Power | From regulator |
| Ground | Lowest point in circuit: junction of input shunt and MOSFET |

If you are using a bare ATMega328, same thing but with an additional reset button and pin headers for programming.

During development I used an Arduino Uno for testing purposes. If you want to do the same, no problem - just connect the 3.3V line from the regulator to the AREF pin on the Uno and adjust the firmware to use an external reference.

In order to properly charge a lead-acid battery, both battery voltage and current must be measured - the voltage to within a high degree of accuracy, the current less so. While my previous design used an INA219 chip, this component is not available in through-hole form and can be difficult to obtain, and did not provide the ability to also monitor current from the input or to the load. The failure of this component in a previous prototype also raises concerns.

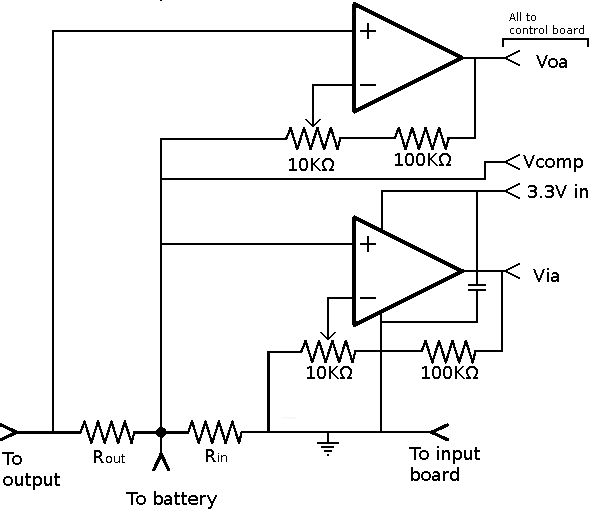

Bidirectional current shunt measurement is difficult - it requires either a more expensive op-amp specialised for the purpose or a differential ADC such as the ADS1115, which is not available in through-hole form. With some creative shunt placement, calibration and software compensation, it is perfectly possible to gather all the required measurements using two unidirectional shunts instead - this needs nothing more than a very common LM358 dual op-amp and a few resistors.

My solution uses two shunts, producing output voltages Vi (input) and Vo (output). The LM358 amplifies these into the range required by the ADC, 0-1.8V. Note that this is not 0-3.3V: while the LM358 can accept an input at ground and swing its output to within 20mV of ground, it cannot swing all the way up to the supply rail. The firmware interprets anything over 1.9V from the output current amplifier as an over-current condition and immediately disconnects output power.
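Because the trip point is a fixed voltage, it can be converted once into an ADC count and compared against each raw reading without any floating-point work in the fast path. This is a minimal sketch assuming a 10-bit ADC referenced to 3.3V; the function names are hypothetical, not taken from the author's firmware.

```cpp
#include <cassert>
#include <cstdint>

constexpr double kAdcRefV = 3.3;
constexpr int kAdcSteps = 1024;
constexpr double kOverCurrentTripV = 1.9;  // trip level from the text

// ADC count corresponding to a given pin voltage (rounded down).
uint16_t countsForVolts(double volts) {
    assert(volts >= 0.0 && volts < kAdcRefV);
    return static_cast<uint16_t>(volts * kAdcSteps / kAdcRefV);
}

// True when the load-current amplifier output is above the trip point,
// meaning the load MOSFET should be switched off immediately.
bool isOverCurrent(uint16_t loadAmpCounts) {
    static const uint16_t tripCounts = countsForVolts(kOverCurrentTripV);
    return loadAmpCounts > tripCounts;
}
```

With these assumptions the 1.9V trip point lands at 589 counts, while the 1.8V full-scale amplifier output sits at about 558 counts, leaving a small margin before a trip.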

Two potentiometers are used to adjust the amplifier gain so that the output is 1.8V at the designed maximum current. More precise calibration can be performed by entering exact I/V ratios in firmware. This approach means there is no need for precision shunts or concern about parasitic resistance: simply put in a length of wire of more-or-less appropriate resistance, then adjust the potentiometers and firmware constants to accommodate it. I used aluminium wire, which makes a workable shunt.
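The firmware constants mentioned above amount to a scale factor and a zero offset per shunt. The sketch below is one plausible arrangement, not the author's code. `ShuntChannel`, `ampsPerCount` and `offsetCounts` are hypothetical names, and deriving the battery's net current as panel current minus load current is an illustrative assumption about how the two unidirectional readings combine.

```cpp
#include <cassert>
#include <cstdint>

// Per-channel constants found during calibration (hypothetical names).
struct ShuntChannel {
    double ampsPerCount;   // chosen so the designed maximum current reads correctly
    int16_t offsetCounts;  // raw reading with no current flowing
};

double channelAmps(const ShuntChannel& ch, uint16_t counts) {
    assert(ch.ampsPerCount >= 0.0);
    int32_t net = static_cast<int32_t>(counts) - ch.offsetCounts;
    if (net < 0) {
        net = 0;  // unidirectional shunt: treat small negative noise as zero
    }
    return net * ch.ampsPerCount;
}

// Assumed combination: with one shunt on the panel side and one on the
// load side, the battery's net current is the difference (positive = charging).
double batteryAmps(const ShuntChannel& in, uint16_t inCounts,
                   const ShuntChannel& out, uint16_t outCounts) {
    return channelAmps(in, inCounts) - channelAmps(out, outCounts);
}
```

Keeping the constants in a small struct means the calibration step only has to update two numbers per shunt, whatever length of wire was used.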

There are two other factors which must be calibrated and compensated for.

In total, the calibration process to ensure accurate measurements requires taking three measurements after the charge controller is assembled:

DC offset compensation in the positive direction is fully automatic, and does not need to be manually set. These measurements complicate initial setup, but eliminate the need for high-precision shunts and resistors and most of the constraints on op-amp selection, reducing the cost and making it easier to obtain suitable components.

An important consideration is stability of the reference voltage. See the 'control' section for more on this. It is essential that the 3.3V rail is exactly 3.3V, with high precision, as it serves as the ADC reference.

All of this design is intended to be cheap. Very cheap. Aluminium wire or long PCB tracks rather than manganin alloy shunts, a calibration procedure in place of precision components, and a low-cost op-amp rather than a proper instrumentation or shunt amplifier. Accuracy is compromised to achieve this aim: perhaps ±10% may be expected. It's poor, but it's good enough to make the key measurements needed to determine when to switch charge mode and when to trigger over-voltage disconnect, and that's the important part.

A potential refinement would be to run the LM358 off of the common positive supply, rather than the 3.3V rail, with resistors inserted before the ADC inputs. This would allow the full ADC input range to be used, increasing precision.

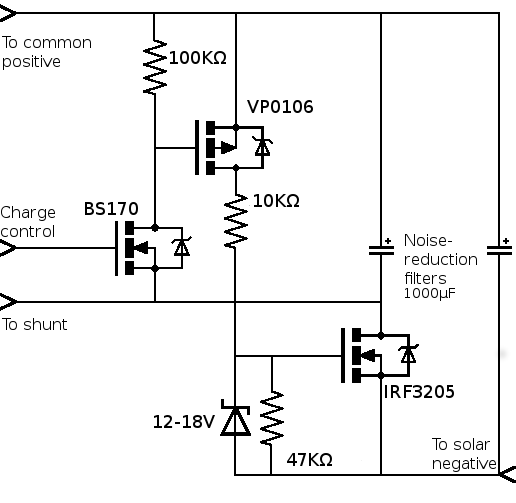

The decision to use N-channel MOSFETs for charge control can require some unusual layouts. I have seen three solutions to this problem: a charge pump (as seen in the PWM5), an opto-isolator drive (as seen in my previous design), and the approach used here, an intermediate transistor. I used a P-channel MOSFET as the intermediate, but a PNP bipolar transistor or a JFET could also be used. This arrangement also allows the use of a zener clamp to limit Vgs and prevent MOSFET damage. Achieving a sufficiently fast fall time on the gate requires a resistor of at most 47kΩ connected from source to gate. This gives a fall time of 0.2ms - room for improvement, but good enough for the 245Hz PWM frequency used here. I initially tried a 100kΩ resistor, but this led to excessive switching losses and an overheated MOSFET.
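A quick RC estimate shows why the resistor value matters. The sketch below treats the gate as a plain capacitor of about 3250pF, roughly the typical input capacitance listed in the IRF3205 datasheet. That is a simplifying assumption: the Miller plateau makes the real transition slower, so the result is only a lower bound. At 47kΩ the time constant comes out around 0.15ms, the same order as the 0.2ms observed, or under 4% of a 245Hz period. Doubling the resistor to 100kΩ roughly doubles the time the MOSFET spends partially on during each cycle.

```cpp
#include <cassert>

// Assumed effective gate capacitance (IRF3205 typical Ciss is ~3250pF).
constexpr double kGateCapF = 3250e-12;

// RC time constant of the gate discharging through the pull-down resistor.
double gateTimeConstantS(double resistorOhm) {
    assert(resistorOhm > 0.0);
    return resistorOhm * kGateCapF;
}

// Fraction of one PWM period taken up by a single time constant.
double fractionOfPeriod(double resistorOhm, double pwmHz) {
    assert(pwmHz > 0.0);
    return gateTimeConstantS(resistorOhm) * pwmHz;
}
```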

One major advantage of the N-channel MOSFET as a switching element is that, with careful choice of a MOSFET with very low Ron, power dissipation is very low. The IRF3205 is readily available and has an Ron of only 8mΩ - even at 20A, it will dissipate only 3.2W. At 10A, it doesn't even need a heatsink.
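The dissipation figures follow from P = I²·R_DS(on). This is a minimal sketch that scales the loss by the fraction of time the switch is on and ignores switching losses, which is only reasonable at a low PWM frequency like the one used here. R_DS(on) also rises with junction temperature, so a hot MOSFET will dissipate somewhat more than these numbers suggest.

```cpp
#include <cassert>

// Conduction loss in a MOSFET switch, P = I^2 * R_DS(on) * duty.
// Switching losses and temperature dependence are deliberately ignored.
double conductionLossW(double currentA, double rdsOnOhm, double dutyCycle = 1.0) {
    assert(rdsOnOhm >= 0.0 && dutyCycle >= 0.0 && dutyCycle <= 1.0);
    return currentA * currentA * rdsOnOhm * dutyCycle;
}
```

For the IRF3205's 8mΩ this gives 3.2W at 20A and 0.8W at 10A with the switch fully on.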

A notable omission in my design is the blocking diode. I have left this out to allow some flexibility in use - as hobbyist solar setups are not standardised, not every user needs the diode in the charge controller. I use an external MPPT optimiser which includes this diode, others may wish to use an ideal diode module, or multiple blocking diodes for combining power from many panels. An earlier revision included an ideal diode incorporated into the input switching, but I decided this over-complicated the design.

I have previously designed an ideal diode circuit which will complement this charge controller perfectly. Most of the components are shared.

As this is a PWM controller, not an MPPT, no high-frequency MOSFET drive is needed.

Using PWM to control charging does have a notable drawback: it creates a lot of noise. This noise can be radiated from the panel, battery or load cables. Without filtering, it could result in severe noise on the load output. Worse, it also appears on the battery - peak voltages above fifteen volts could have electrochemical effects that severely shorten battery life. A few well-placed 1000μF capacitors take care of the worst of it.

This design could be adapted for MPPT, but that would require the use of a MOSFET driver chip, a physical layout more suited to high-frequency operation, careful consideration of gate ringing, a large high-current inductor and more sophisticated software. This is why MPPT charge controllers are more expensive. Even so, this charge controller is half-way to being MPPT capable and such extension may be a project for future consideration.

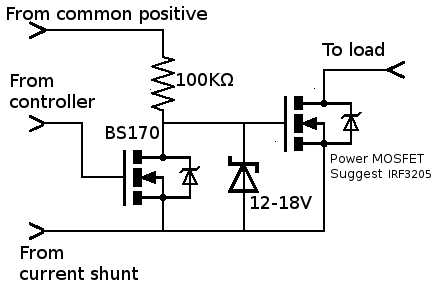

Switching of the load is required primarily to prevent over-discharge of the battery, but it is also used to disconnect the load on over-current, or manually by pressing the control button. This is also done with an N-channel MOSFET, but this one is simpler to drive as it shares the ground potential of the ATmega. A BS170 is again used, but this time just to shift the 3.3V output of the ATmega up to a level that can operate the power MOSFET. While the gate voltage cannot exceed the safe range under normal operation, it may do so if the battery is disconnected or fails, so again a zener clamp is required. Most N-channel MOSFETs will work, but I suggest the IRF3205 again, as its very low Ron means minimal heat dissipation and so only a tiny heatsink. You might also want to mount the MOSFET off-board to make it easier to connect thicker wires.
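The load-switch decision combines two conditions: a low-voltage disconnect, which needs hysteresis so the load does not chatter on and off as the battery voltage recovers, and a latched over-current trip that stays off until the control button is pressed. The sketch below shows one way to structure this. The 11.5V and 12.6V thresholds are hypothetical placeholders, since the text does not give the author's values, and manual switching with the button is left out for brevity.

```cpp
#include <cassert>

struct LoadSwitch {
    double disconnectV = 11.5;  // hypothetical low-voltage disconnect level
    double reconnectV = 12.6;   // hypothetical reconnect level, above disconnectV
    bool voltageOk = true;
    bool overCurrentLatched = false;

    // Call once per measurement cycle; returns the desired MOSFET state.
    bool update(double batteryV, bool overCurrent, bool buttonPressed) {
        assert(reconnectV > disconnectV);
        if (overCurrent) {
            overCurrentLatched = true;   // trip immediately and stay off
        } else if (buttonPressed) {
            overCurrentLatched = false;  // manual reset after a trip
        }
        // Hysteresis: between the two thresholds the previous state is kept.
        if (batteryV < disconnectV) {
            voltageOk = false;
        } else if (batteryV > reconnectV) {
            voltageOk = true;
        }
        return voltageOk && !overCurrentLatched;
    }
};
```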

Another capacitor here is used to help smooth the outgoing current, allowing for easier measurement.

One issue to be aware of is the variation in output voltage according to battery voltage - if you power lights off of this directly, their brightness will vary quite noticeably with battery charge. If you want to fix this, you can just put a DC-DC converter module on the output.

Parts of this circuit carry high currents - even a 100W installation charging a lead-acid battery may draw as much as 10A from the panels, and load current could exceed this. Such high currents are difficult to handle on stripboard or PCB without resistive losses. To help overcome this, the load, battery and panel terminals all share a common positive rail. This is a happy consequence of the decision to use N-channel MOSFETs for power switching, and it also makes construction easier, as all three can be brought to a common terminal block.

I have used three types of transistor in this design: BS170 N-channel small-signal MOSFET, VP0106 P-channel MOSFET, and IRF3205 power MOSFET. These are all very common, easily available parts - but, should you want to substitute them, this is not difficult. You can replace them with just about any MOSFET of matching channel and, for the power MOSFET, current capacity. The only constraint is that the BS170 is switched by 3.3V logic, and so any substitute must have a suitably low threshold voltage. Alternatively, the BS170 could be replaced with a 2N2222 bipolar transistor and an appropriate base resistor.

The display I used is the 128x64 monochrome I2C OLED. There are a lot of these little OLED displays available, so if you cannot get the exact one you may need to slightly alter the firmware. You could also replace it with another display device entirely, but this will obviously require substantially more alterations.

The firmware is Arduino-based and can run on an 8MHz 3.3V Arduino Pro Mini. Running it on a 16MHz Arduino may require some adjustment due to the higher default PWM frequency.
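The clock dependence is easy to quantify. On the ATmega328, `analogWrite()` on D3 drives Timer2 in 8-bit phase-correct PWM mode, in which the counter runs up to 255 and back down, so each period lasts 510 timer ticks. The Arduino core's default Timer2 prescaler is 64. This sketch computes the resulting frequency: 245Hz at 8MHz, matching the figure used for the gate-drive design, and about 490Hz at 16MHz.

```cpp
#include <cassert>

// Frequency of 8-bit phase-correct PWM: one period is 2 * 255 = 510 ticks
// of the prescaled timer clock. 64 is the Arduino core's default prescaler.
double phaseCorrectPwmHz(double cpuHz, int prescaler = 64) {
    assert(cpuHz > 0.0 && prescaler > 0);
    return cpuHz / (prescaler * 510.0);
}
```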

Download PWM_Solar_Controller.ino.

Lead-acid batteries are moderately difficult to charge properly. Doing so requires precise measurement of the battery voltage, and measurement of current with less precision. This firmware uses a two-state model: a saturation charge that maintains a battery voltage of Vcharge until the current required to do so (passed through a peak follower to discount the effect of passing clouds) falls below a set threshold Icharged, at which point it transitions to a float charge that maintains a second, lower voltage of Vfloat. The transition back to saturation charge occurs when the voltage falls below a set level (11.0 to 12.0V is typical) or after 240 hours, whichever comes first. This charge process is based upon that described on page 73 of the GNB Industrial Power Handbook for Stationary Lead-Acid Batteries, Part 1.
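The two-state model maps naturally onto a small state machine. The sketch below is an illustration, not the author's firmware. The exponential peak-follower decay rate, the 0.05V "at target" tolerance and all identifiers are assumptions. One detail matters: the current is only tested against Icharged while the battery is actually being held at Vcharge, otherwise the low current at night would be mistaken for a full battery.

```cpp
#include <cassert>

enum class ChargeState { Saturation, Float };

struct ChargeController {
    double vCharge;       // saturation target, e.g. 14.4V for flooded
    double vFloat;        // float target, e.g. 13.4V
    double iCharged;      // switch to float once the peak current falls below this
    double vResaturate;   // return to saturation below this (11.0-12.0V typical)
    double maxFloatHours = 240.0;
    ChargeState state = ChargeState::Saturation;
    double peakCurrent = 0.0;   // peak follower on charge current
    double hoursInFloat = 0.0;

    ChargeController(double charge, double flt, double chargedA, double resatV)
        : vCharge(charge), vFloat(flt), iCharged(chargedA), vResaturate(resatV) {
        assert(vFloat < vCharge);
    }

    double targetVoltage() const {
        return state == ChargeState::Saturation ? vCharge : vFloat;
    }

    // Call periodically with the latest readings and elapsed time in hours.
    void update(double batteryV, double chargeA, double dtHours,
                double decayPerHour = 0.5) {
        assert(dtHours >= 0.0);
        // Peak follower: rises instantly, decays slowly, so a passing cloud
        // that briefly cuts the current does not look like a full battery.
        double factor = 1.0 - decayPerHour * dtHours;
        peakCurrent *= (factor > 0.0 ? factor : 0.0);
        if (chargeA > peakCurrent) peakCurrent = chargeA;

        if (state == ChargeState::Saturation) {
            bool atTarget = batteryV >= vCharge - 0.05;  // assumed tolerance
            if (atTarget && peakCurrent < iCharged) {
                state = ChargeState::Float;
                hoursInFloat = 0.0;
            }
        } else {
            hoursInFloat += dtHours;
            if (batteryV < vResaturate || hoursInFloat >= maxFloatHours) {
                state = ChargeState::Saturation;
            }
        }
    }
};
```

For a flooded battery, `ChargeController c(14.4, 13.4, 2.0, 12.0);` would use the preset voltages, a 2A threshold and a 12.0V resaturation level.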

The exact values of these depend upon the battery. Though all lead-acids use the same electrochemistry, variations in such factors as electrolyte concentration and plate alloy material can affect the optimal voltages. If possible, these should all be set in accordance with the datasheet provided by the battery manufacturer in order to maximise battery life - but, if this information is not available, the source code includes 'preset' suggestions that will be approximately correct for flooded batteries, AGM batteries and gelled batteries, based on figures from a BBL guide to battery charging.

| Preset | Vcharge (V) | Vfloat (V) |
|---|---|---|
| Flooded | 14.4 | 13.4 |
| AGM | 14.6 | 13.4 |
| Gel | 14.2 | 13.6 |

While the voltage sense input does need a simple RC filter to remove the PWM ripple, none is needed for the current sense. To reduce component count, this function is instead performed in software using crude DSP: a number of measurements are taken and averaged. For the same reason, debouncing of the control button is done in software. Regulation of the charging current to maintain a fixed voltage is done through a simple backoff that lowers the PWM duty cycle as the target voltage is approached.
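Both software techniques are small. The sketch below shows a burst average over raw ADC samples and one possible reading of the "simple backoff": the 8-bit duty cycle is nudged up when the battery is well below target, eased down inside a band just below target, and cut back sharply on overshoot. The sample source is passed in as any callable, and the step sizes and 0.1V band are assumptions, not the author's values.

```cpp
#include <cassert>
#include <cstdint>

// Crude DSP: average a burst of samples to suppress PWM ripple.
// readSample is any callable returning one raw ADC reading.
template <typename ReadFn>
uint16_t averagedReading(ReadFn readSample, uint16_t count) {
    assert(count > 0);
    uint32_t sum = 0;
    for (uint16_t i = 0; i < count; ++i) {
        sum += readSample();
    }
    return static_cast<uint16_t>(sum / count);
}

// One step of a backoff regulator on an 8-bit PWM duty cycle.
uint8_t nextDuty(uint8_t duty, double batteryV, double targetV,
                 double bandV = 0.1) {
    int d = duty;
    if (batteryV > targetV) {
        d -= 8;  // overshoot: back off quickly
    } else if (batteryV > targetV - bandV) {
        d -= 1;  // approaching target: ease off gently
    } else {
        d += 1;  // well below target: allow more charge current
    }
    if (d < 0) d = 0;
    if (d > 255) d = 255;
    return static_cast<uint8_t>(d);
}
```

Averaging an even number of samples over whole PWM periods cancels most of the ripple; the asymmetric step sizes make the regulator quick to back off but slow to push the battery voltage upward.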

Datasheets for components used:

LM358 dual operational amplifier.

IRF3205 power MOSFET.

VP0106 P-channel MOSFET.

BS170 or 2N7000 N-channel MOSFET - for these purposes, they are interchangeable.

LP2950 3.3V regulator.

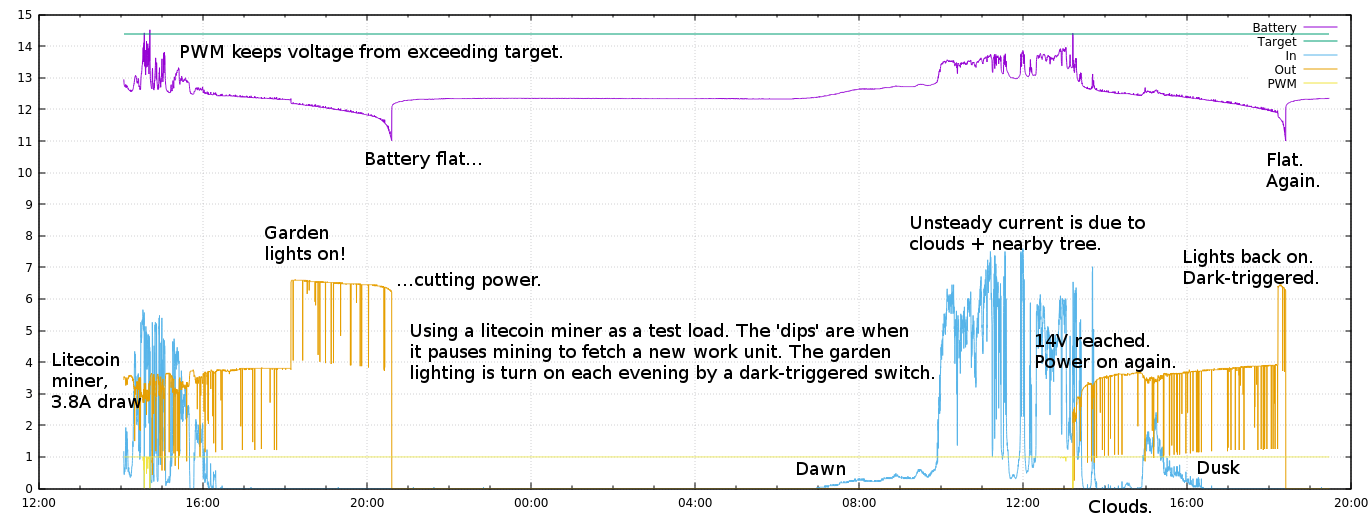

This test shows the performance of the controller during a typical daily cycle. For testing purposes it is connected to two load devices: A litecoin miner which draws 3.5A, with brief dips in consumption while fetching a new work unit, and a string of LED lights which are triggered by a light sensor to turn on after dusk.

The charge controller readings are logged via serial output to a Raspberry Pi. Current monitoring can be observed working correctly, though the power gathered is small - this is only due to poor placement of the panels, as they spend much of the day in shadow from a house and a nearby tree. Additionally, the test was performed during a March day of standard British cloudy weather. The current from the panels is too small to properly evaluate the charging process, but the low-voltage disconnect can be observed working correctly. It can also be seen that the battery voltage starts to drop rapidly below around 11.8V, suggesting that raising the disconnect voltage could increase battery life with only a minimal reduction in energy storage capacity. The battery charges the following day until the reconnect voltage is reached, at which point the load is reconnected and litecoin mining resumes. This cycle could be repeated indefinitely, though the poorly sited 100W panel is not sufficient to fully charge the battery under such a heavy load.

It is noticeable in the graph that when the charge current increases, the apparent load current decreases. It is not clear whether this is due to a poorly designed current monitoring circuit - compromises were made to achieve lower cost and ease of construction - or simply that the internal buck converter in the test load (a Gridseed litecoin miner) draws less current as the supply voltage rises, and charge current means a higher supply voltage to this load.

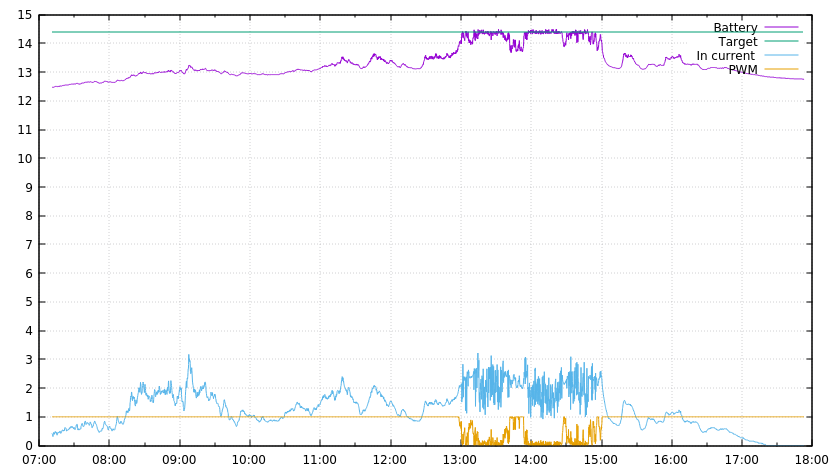

This test shows the PWM function maintaining a steady battery voltage during the charge process. For testing purposes, the load has been disconnected to allow the battery to reach near full charge. A few more hours of sunlight would have been sufficient for the charge controller to switch into 'float' mode and reduce the charging target voltage.

Connecting an oscilloscope across the battery revealed that the voltage during charging rose higher than is good for the battery, but another 1000μF capacitor across the terminals was sufficient to smooth out the worst of the PWM ripple. If the ratio of charge current to battery capacity is too high, a pi filter may be needed.

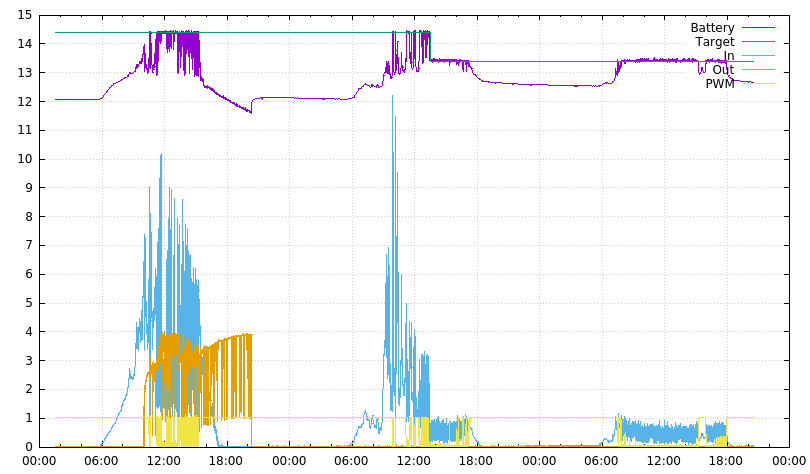

This graph shows the charging process tested over three days. On day one the litecoin miner is used to drain the battery. On the morning of day two this miner was unplugged, allowing the battery to charge without load. The charger maintained it at the target voltage for saturation charge until the current required to maintain this voltage fell below two amps, at which point the target voltage was reduced to the lower float charge level.

This controller design holds some advantages over the earlier attempt, but the current measurement system leaves something to be desired. Accuracy is less than ideal, and it is impossible to fully eliminate an undesired influence of charge-current upon the load-current reading. An ADS1115 may be a better option: This part was initially rejected because it is available only in surface-mount form, but perhaps should be reconsidered. The low-cost shunts and LM358 amplifier, though, are still sufficient for a functional design.

The drive circuitry for using only N-channel MOSFETs for power switching performed perfectly, and shall certainly be retained in any future design. It consumes minimal current and allows for both good efficiency and a very high current handling capacity.

Temperature compensation to maximise battery life is a highly desirable feature, and should also be added. This should be easily possible using either a simple thermistor in a potential divider or the DS18B20 digital temperature sensor popular with hobbyists.
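If the thermistor route is taken, the firmware needs two pieces: converting the divider reading to a temperature, and shifting the charge set-points. The sketch below uses the standard Beta-model equation for an NTC thermistor placed on the low side of a divider fed from the 3.3V reference, so the reference voltage cancels out. The 10kΩ/3950 part values and the default compensation coefficient of -4mV per cell per °C are assumptions; a few millivolts per cell per degree is the usual order of magnitude, but the battery datasheet figure should be used where available.

```cpp
#include <cassert>
#include <cmath>

// Beta-model NTC conversion. Thermistor to ground, fixed resistor to the
// reference: counts/1024 = R / (R + fixed), so R = fixed*counts/(1024-counts).
// Default part values are typical 10k NTC figures, assumed for illustration.
double thermistorCelsius(double adcCounts, double fixedOhm = 10000.0,
                         double r0Ohm = 10000.0, double t0C = 25.0,
                         double beta = 3950.0) {
    assert(adcCounts > 0.0 && adcCounts < 1024.0);
    double rOhm = fixedOhm * adcCounts / (1024.0 - adcCounts);
    double t0K = t0C + 273.15;
    double invT = 1.0 / t0K + std::log(rOhm / r0Ohm) / beta;
    return 1.0 / invT - 273.15;
}

// Shift a 25°C charge set-point for temperature. A 12V battery has six
// cells; the per-cell coefficient is an assumed placeholder.
double compensatedVoltage(double setpoint25C, double tempC,
                          double mvPerCellPerC = -4.0) {
    return setpoint25C + 6.0 * mvPerCellPerC / 1000.0 * (tempC - 25.0);
}
```

With these defaults, a 14.4V saturation target would drop to about 14.16V at 35°C.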

One great deficiency is the lack of MPPT capability, though there is potential for adding this in a future revision, as only the input switching stage and software would need updating, along with an increase in the microcontroller clock frequency.

PWM imposes a substantial ripple voltage upon the battery. This is not healthy for a battery during charging, and must be corrected using a number of bulky electrolytic capacitors. A higher PWM frequency would reduce the magnitude of this ripple, but would also require minor alterations to the input MOSFET driver.

Even with these issues, the controller is able to perform all the functions expected of a low-cost 12V solar charge controller to an acceptable standard, and with user control of voltage thresholds and battery management combined with the ability to output continuous readings for logging purposes it allows for much more effective optimisation towards improving battery life - features usually only found on a far more expensive controller. The choice of parts allows for it to be produced with ease by a hobbyist, or manufactured at very low cost, and the design is flexible enough to allow easy substitutions. Overall, this is a highly capable and functional design. It is certainly superior to most low-cost off-the-shelf PWM charge controllers.